Machine learning developers gained new abilities to develop and run their ML programs on the framework and hardware of their choice thanks to the OpenXLA Project, which today announced the availability of key open source components.

Data scientists and ML engineers often spend a lot of time optimizing their models for each hardware target. Whether they’re working in a framework like TensorFlow or PyTorch and targeting GPUs or TPUs, there has been no way to avoid this manual effort, which consumes precious time and makes it difficult to move applications at a later date.

This is the general problem targeted by the folks behind the OpenXLA Project, which was founded last fall and today includes Alibaba, Amazon Web Services, AMD, Apple, Arm, Cerebras Systems, Google, Graphcore, Hugging Face, Intel, Meta, and NVIDIA as its members.

By creating a unified machine learning compiler that works with a range of ML development frameworks and hardware platforms and runtimes, OpenXLA can accelerate the delivery of ML applications and provide greater code portability.

Today, the group announced the availability of three open source tools as part of the project. XLA is an ML compiler for CPUs, GPUs, and accelerators; StableHLO is an operation set for high-level operations (HLO) in ML that provides portability between frameworks and compilers; and IREE (Intermediate Representation Execution Environment) is an end-to-end MLIR (Multi-Level Intermediate Representation) compiler and runtime for mobile and edge deployments. All three are available for download from the OpenXLA GitHub site.
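To make the role of StableHLO concrete, here is a minimal sketch using JAX, one of the frameworks that sits on top of XLA. It assumes a recent JAX release in which the lowered text is emitted in the StableHLO dialect; the function `scaled_sum` and its inputs are hypothetical and chosen only for illustration.

```python
import jax
import jax.numpy as jnp


def scaled_sum(x, y):
    # A tiny numerical function: scale, add, then reduce to a scalar.
    return jnp.sum(2.0 * x + y)


x = jnp.arange(4.0)
y = jnp.ones(4)

# Lower the function for these argument shapes without running it.
lowered = jax.jit(scaled_sum).lower(x, y)

# Text form of the compiler-facing intermediate representation
# (assumed to be StableHLO in recent JAX versions).
stablehlo_text = lowered.as_text()
print(stablehlo_text)
```

The printed module is the kind of framework-neutral description that a compiler such as XLA or IREE can then consume, which is the portability layer the project describes.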

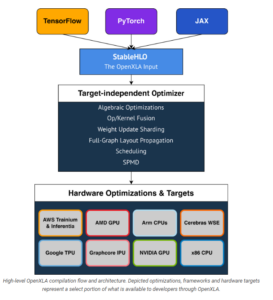

Initial frameworks supported by OpenXLA include TensorFlow, PyTorch, and JAX, a newer Google framework. JAX is designed for transforming numerical functions, and is described as bringing together a modified version of autograd and TensorFlow’s XLA while following the structure and workflow of NumPy. Initial hardware targets and optimizations include Intel CPUs, Nvidia GPUs, Google TPUs, AMD GPUs, Arm CPUs, AWS Trainium and Inferentia, Graphcore’s IPU, and the Cerebras Wafer-Scale Engine (WSE). OpenXLA’s “target-independent optimizer” targets algebraic functions, op/kernel fusion, weight update sharding, full-graph layout propagation, scheduling, and SPMD for parallelism.
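As a rough illustration of what this looks like from the developer's side, the sketch below compiles a NumPy-style function with `jax.jit`, which hands the computation to XLA. The function `gelu_like` is a hypothetical example, and whether XLA actually fuses its elementwise operations into a single kernel depends on the backend and version; the code only checks that the compiled and uncompiled results agree.

```python
import jax
import jax.numpy as jnp


def gelu_like(x):
    # A chain of elementwise operations, the kind of pattern an
    # optimizing compiler may fuse into fewer kernels.
    inner = 0.79788456 * (x + 0.044715 * x ** 3)
    return 0.5 * x * (1.0 + jnp.tanh(inner))


# Wrap the function so it is compiled by XLA on first call.
fast_gelu = jax.jit(gelu_like)

x = jnp.linspace(-3.0, 3.0, 8)
eager = gelu_like(x)      # op-by-op execution
compiled = fast_gelu(x)   # XLA-compiled execution

# The same source runs on whichever backend JAX finds (CPU, GPU, or TPU).
print("backend:", jax.devices()[0].platform)
print("results match:", bool(jnp.allclose(eager, compiled, atol=1e-5)))
```

The point of the sketch is that the model code does not change when the hardware target does; the compiler, not the developer, handles the target-specific work.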

The OpenXLA compiler products can be used for a variety of ML use cases, including full-scale training of massive deep learning models such as large language models (LLMs) and generative computer vision models like Stable Diffusion. They can also be used for inference; Waymo already uses OpenXLA for real-time inferencing on its self-driving cars, according to a post today on the Google open source blog.

The OpenXLA compiler ecosystem provides portability between ML development tools and hardware targets (Image source: OpenXLA Project)

OpenXLA members touted some of their early successes with the new compiler. Alibaba, for instance, says it was able to train a GPT2 model on Nvidia GPUs 72% faster using OpenXLA, and saw an 88% speedup for a Swin Transformer training task on GPUs.

Hugging Face, meanwhile, said it saw about a 100% speedup when it paired XLA with its text generation model written in TensorFlow. “OpenXLA promises standardized building blocks upon which we can build much needed interoperability, and we can’t wait to follow and contribute!” said Morgan Funtowicz, head of machine learning optimization for the Brooklyn, New York, company.

Meta was able to “achieve significant performance improvements on important projects,” including using XLA on PyTorch models running on Cloud TPUs, said Soumith Chintala, the lead maintainer for PyTorch.

The chip startups are pleased with XLA, which reduces the risk to customers of adopting relatively new, unproven hardware. “Our IPU compiler pipeline has used XLA since it was made public,” said David Norman, Graphcore’s director of software design. “Thanks to XLA’s platform independence and stability, it provides an ideal frontend for bringing up novel silicon.”

“OpenXLA helps extend our user reach and accelerated time to solution by providing the Cerebras Wafer-Scale Engine with a common interface to higher level ML frameworks,” said Andy Hock, a vice president and head of product at Cerebras. “We are tremendously excited to see the OpenXLA ecosystem available for even broader community engagement, contribution, and use on GitHub.”

AMD and Arm, which are battling bigger chipmakers for pieces of the ML training and serving pies, are also happy members of the OpenXLA Project.

“We value projects with open governance, flexible and broad applicability, cutting edge features and top-notch performance and are looking forward to the continued collaboration to expand open source ecosystem for ML developers,” Alan Lee, AMD’s corporate vice president of software development, said in the blog.

“The OpenXLA Project marks an important milestone on the path to simplifying ML software development,” said Peter Greenhalgh, vice president of technology and fellow at Arm. “We are fully supportive of the OpenXLA mission and look forward to leveraging the OpenXLA stability and standardization across the Arm Neoverse hardware and software roadmaps.”

Curiously absent are IBM, which continues to innovate on chips with its Power10 processor, and Microsoft, the world’s second-largest cloud provider behind AWS.

Related Items:

Google Announces Open Source ML Compiler Project, OpenXLA

AMD Joins New PyTorch Foundation as Founding Member

Inside Intel’s nGraph, a Universal Deep Learning Compiler
